I've started mapping something I keep seeing.

Every school district I talk to — whether it's a 3-campus charter network or a 30,000-student unified district — falls into one of four stages when it comes to AI. Not where they say they are. Where they actually are, based on what's happening in classrooms every day.

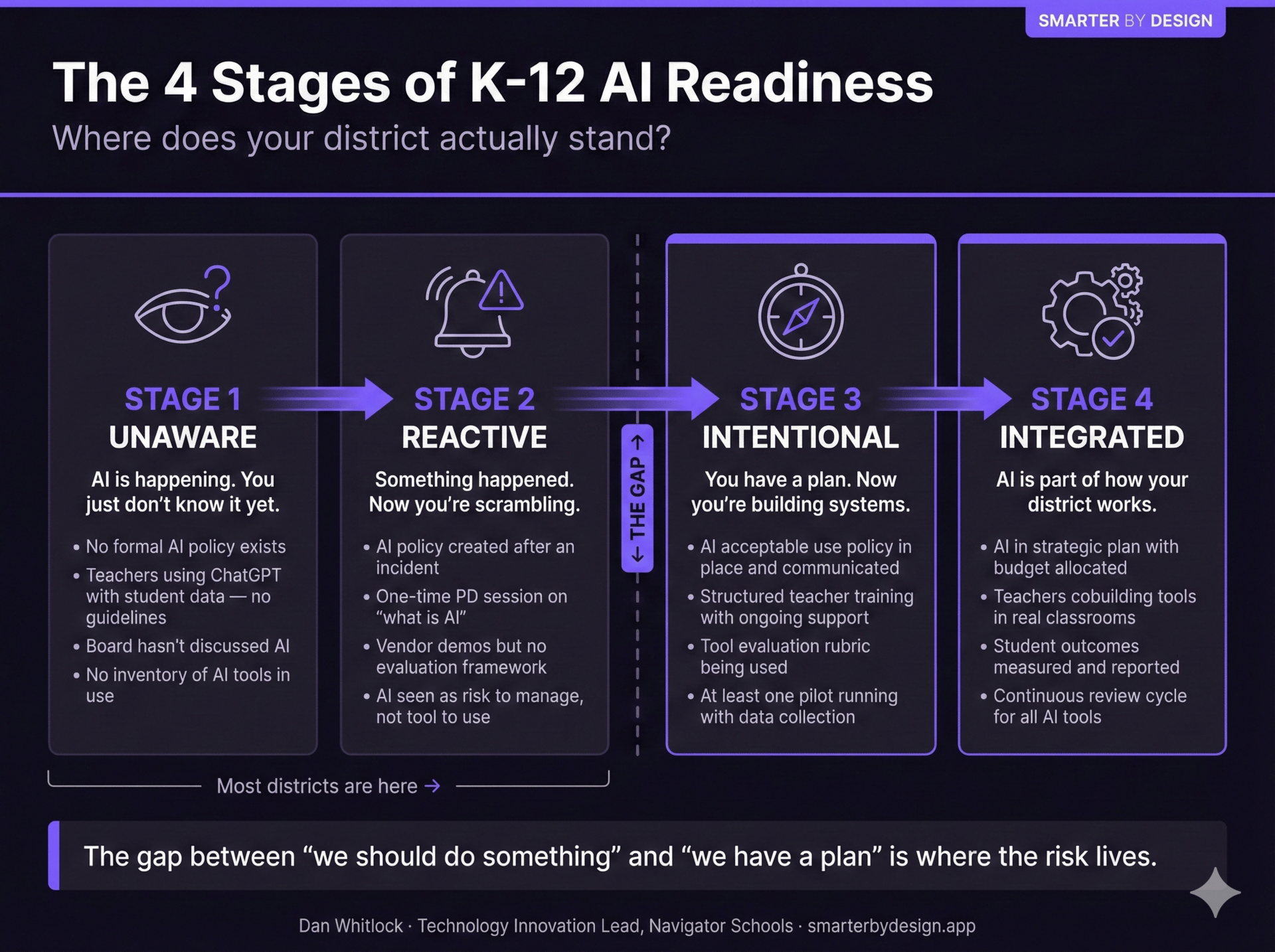

Here's the framework.

Stage 1: Unaware. AI is already in the building. Teachers are pasting student essays into ChatGPT for feedback. Someone in the front office is using it to draft parent emails. An instructional coach found a free summarizer tool and shared it in a group chat. None of this is malicious. All of it is invisible to leadership. There's no policy because no one thinks they need one yet.

Stage 2: Reactive. Something happened. Maybe a parent found out their kid's writing was being run through an AI tool. Maybe a teacher posted about it on social media. Maybe a board member read a scary headline and asked questions no one could answer. Now there's a scramble: a hastily written paragraph added to the existing technology acceptable use policy (AUP), a one-hour PD session on "what is AI," and a vague sense that someone should probably be paying attention to this.

Stage 3: Intentional. This is where it starts to feel like a strategy instead of a reaction. There's a real AI acceptable use policy — one that addresses data classification, tool approval workflows, and what happens when something goes wrong. Teacher training is ongoing, not a one-time event. There's at least one structured pilot running with actual data collection. Leadership can name the AI tools in use across the district. Not all of them. But most.

Stage 4: Integrated. AI is in the strategic plan. There's budget behind it. Teachers aren't just using tools — they're involved in shaping them. Student outcomes are being measured. There's a review cycle that sunsets tools that aren't earning their place. This is rare. I can count on one hand the districts I've seen operating here consistently.

Here's why I think this framework matters: most districts I talk to are somewhere between Stage 1 and Stage 2. And the gap between those two stages and Stage 3 is enormous.

It's not a technology gap. It's a visibility gap.

At Navigator Schools, we were able to move through these stages faster than most — not because we had more money or better technology, but because we had visibility into what was actually happening. We knew which tools teachers were using. We knew which workflows were painful enough to warrant building something new. We knew where student data was going and where it wasn't.

That visibility didn't come from an audit or a vendor assessment. It came from being in classrooms. Talking to teachers. Watching where they lost time, where they improvised, where they quietly used whatever tool they could find because the official options weren't solving the problem.

The pattern I keep seeing in Stage 1 and Stage 2 districts is the same: leadership is making decisions about AI based on what vendors are telling them, not what teachers are showing them.

A vendor demo looks great. Polished interface, impressive features, a case study from a school you've heard of. But that demo doesn't know that your 3rd-grade team has 45 minutes of uninterrupted instructional time and the tool requires a 10-minute setup. It doesn't know that your teachers are already using three platforms and can't absorb a fourth. It doesn't know that the real bottleneck isn't "finding an AI tool"; it's the 30 minutes a day teachers spend on documentation that could be handled differently.

The move from Stage 2 to Stage 3 isn't about buying better technology. It's about asking better questions.

Questions like:

What AI tools are our teachers already using — and why did they choose those tools over what we've provided?

When a teacher finds a new AI tool, what happens next? Is there a process? Or does everyone just wing it?

If a student's data ended up in a system we didn't approve, would we even know?

Which of our daily workflows are so painful that teachers are solving them with whatever they can find — and what would it look like to solve them intentionally?

I don't ask those questions to make anyone feel behind. I ask them because the districts that answer them honestly are the ones that move. The ones that skip them end up buying something shiny in January that nobody's using by March.

If you read this and thought, "I'm not totally sure where we'd fall" — that's actually the right response. It means you're being honest.

I built a tool for exactly this moment. It's called the K-12 AI Readiness Checklist — a free self-assessment across six dimensions: policy, privacy, teacher readiness, student-facing AI, tool governance, and leadership vision. No vendor pitch. Just a structured way to figure out where you actually stand.

You can grab it here: checklist.smarterbydesign.app

Fill it out with your leadership team. Twenty minutes. Then you'll know where to start.

— Dan

P.S. If your checklist surfaces more gaps than you expected — that's the point. I offer a free 30-minute readiness review for district leaders who want to talk through their results. No pitch, no pressure. Just a conversation. Details at explorenovapath.com